Deep Sea VR Experience

In the first year of my master's in Interaction Technology, I followed a course called "Designing Interactive Experiences", or DIE, if you will. The task was to create a memorable, interactive experience. This was during the peak of the corona crisis, so everybody was quarantined and working from home. This inspired our group to create a product that would let people experience something other than the environment they were stuck in, to add some variety. I personally really like the concept of letting people go somewhere they couldn't go before. For example, instead of letting people experience what it's like to have a drink at a bar again, have them experience what it's like to be in space, or at the bottom of the ocean. We eventually chose the latter. The whole experience takes the form of an Android application for the Google Cardboard VR headset.

Fishes & Fish AI

The first things I made were the fishes. We got our animated fishes, skyboxes, landscaping assets like coral and rocks, and even a sunken ship from the Unity Asset Store. This saved us a huge amount of time on modelling, texturing, rigging, and animating. The hardest part here was fixing the orientations and sizes of all the fishes so that they face forwards and are properly scaled. We have over 100 different types of sea creatures available in our simulation right now, including tropical fishes, sharks, octopuses, squids, whales, stingrays, turtles, snakes, shrimp, otters, ducks, sea lions, starfish, jellyfish, scary deep sea fish, and even prehistoric fishes.

The fish AI was a tricky part, since every fish is controlled by the same script, even though different behavior is expected of them. Right now, it works as follows. The selected fishes are spawned in the desired numbers at random positions within a predefined area. The environment is filled with invisible waypoints, which allows me to create certain points of interest, like coral reefs. The fish AI script starts by searching for a waypoint to swim to. The waypoint should be active and in the line of sight of the fish. If there is something between the fish and the waypoint, the fish AI will not accept it. This prevents the fishes from swimming through the terrain.
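The waypoint search described above could be sketched roughly as follows. This is an engine-neutral Python illustration, not the actual Unity script: the `Waypoint` and `Obstacle` types, the sphere-shaped obstacles standing in for terrain, and the random choice among valid waypoints are all assumptions made for the example.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Waypoint:
    position: tuple  # (x, y, z)
    active: bool = True


@dataclass
class Obstacle:
    # Hypothetical stand-in for terrain: a sphere that blocks line of sight.
    center: tuple
    radius: float


def segment_hits_sphere(start, end, obstacle):
    """Return True if the straight line from start to end touches the sphere."""
    sx, sy, sz = start
    dx, dy, dz = end[0] - sx, end[1] - sy, end[2] - sz
    length_sq = dx * dx + dy * dy + dz * dz
    if length_sq == 0.0:
        t = 0.0
    else:
        cx, cy, cz = obstacle.center
        # Project the sphere center onto the segment and clamp to its ends.
        t = ((cx - sx) * dx + (cy - sy) * dy + (cz - sz) * dz) / length_sq
        t = max(0.0, min(1.0, t))
    closest = (sx + t * dx, sy + t * dy, sz + t * dz)
    return math.dist(closest, obstacle.center) <= obstacle.radius


def pick_waypoint(fish_position, waypoints, obstacles, rng=random):
    """Pick a random waypoint that is active and in the fish's line of sight."""
    candidates = [
        wp for wp in waypoints
        if wp.active
        and not any(segment_hits_sphere(fish_position, wp.position, ob)
                    for ob in obstacles)
    ]
    return rng.choice(candidates) if candidates else None
```

In Unity itself the line-of-sight check would more likely be a physics raycast or linecast against the terrain collider than a hand-written sphere test.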

The fishes then swim towards the waypoint while turning to face it. This was actually quite difficult, because moving straight at the waypoint could make the fishes swim sideways whenever they weren't oriented towards it yet. I fixed this by adjusting their script. Now, they constantly move forwards, and constantly turn to try and face the waypoint. This gives them smoother turns and more natural behavior. It also makes the invisible waypoints harder to detect, since the fishes may circle in and out both ways (to their right or left).
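A minimal sketch of this "always move forwards, always turn towards the waypoint" idea, reduced to 2D for brevity. The function name `step_fish` and the `speed`, `turn_rate`, and `dt` parameters are illustrative assumptions, not names from the project.

```python
import math


def step_fish(x, y, heading, target, speed, turn_rate, dt):
    """Advance one fish by one time step.

    heading and turn_rate are in radians (per second for turn_rate).
    The fish never slides sideways: it only ever moves along its heading.
    """
    desired = math.atan2(target[1] - y, target[0] - x)
    # Smallest signed angle from the current heading to the desired one.
    diff = (desired - heading + math.pi) % (2 * math.pi) - math.pi
    # Turn towards the waypoint, but no faster than the turn rate allows.
    max_turn = turn_rate * dt
    heading += max(-max_turn, min(max_turn, diff))
    # Move forwards along the (possibly still misaligned) heading.
    x += math.cos(heading) * speed * dt
    y += math.sin(heading) * speed * dt
    return x, y, heading
```

Because the turn is capped per step, a fish that starts out facing away from its waypoint traces an arc instead of snapping around, which is where the smoother turns come from.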

Waypoints that are too far away from the player are deactivated. This passively and unnoticeably keeps the fishes from straying too far from the player, since we don't want to spend our processing power on moving fish the player can't see. The fishes can also bump into each other. When this happens, there is a random chance that either fish will change direction (find a new waypoint). The larger the fish, the lower this chance. So if a clownfish bumps into a whale, the clownfish is very likely to change its direction, but the whale is likely to just keep swimming and ignore the clownfish.
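Both rules can be sketched in a few lines. The text only says that larger fish are less likely to change direction, so the exact formula in `redirect_chance` is an assumption, as are the function names, the dictionary layout of a waypoint, and the size units.

```python
import math
import random


def redirect_chance(size, reference_size=1.0):
    """Assumed formula: the chance shrinks towards 0 as the fish gets larger."""
    return reference_size / (reference_size + size)


def on_bump(size_a, size_b, rng=random):
    """Roll independently for each fish; True means 'find a new waypoint'."""
    return (rng.random() < redirect_chance(size_a),
            rng.random() < redirect_chance(size_b))


def update_active_waypoints(waypoints, player_position, max_distance):
    """Deactivate waypoints that are too far from the player."""
    for wp in waypoints:
        distance = math.dist(wp["position"], player_position)
        wp["active"] = distance <= max_distance
```

With these assumed numbers, a fish of size 0.1 redirects on roughly nine bumps out of ten, while a fish of size 20 does so on roughly one in twenty.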

Terrain

This project was my first experience with making terrain in Unity. This is actually really fun, and our underwater environment allows for a huge amount of creativity here. The environment itself is filled with colorful coral, kelp plants, sandy parts, rock structures with a lot of verticality, and stationary animals such as starfish, oysters, and mussels. Assets like coral and rocks also came from the Unity Asset Store.

The water surface was created using a script-generated mesh with a semi-transparent, two-sided shader. A script then moves this mesh using Perlin noise, and also moves the texture itself. We added some camera and lighting effects to create an underwater effect. If the player is above the surface, we set the camera's clear flags (which determine what is drawn wherever no object is in front of the camera) to an ocean skybox. If the player goes underwater, we set them to a solid blue-greenish color. We also enable some fog, to recreate the effect of semi-transparent water and reduce GPU stress. The player also doesn't emit air bubbles when above water.
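The switch between the two looks boils down to a single comparison against the water surface. The sketch below shows that decision in Python; the `RenderSettings` type and its field names are hypothetical, and turning fog off above water is an assumption (the text only says fog is enabled underwater).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RenderSettings:
    background: str        # what fills the view where no object is drawn
    fog_enabled: bool
    bubbles_enabled: bool  # whether the player emits air bubbles


# Assumed presets based on the description above.
ABOVE_WATER = RenderSettings("ocean skybox", fog_enabled=False, bubbles_enabled=False)
UNDERWATER = RenderSettings("solid blue-green", fog_enabled=True, bubbles_enabled=True)


def settings_for(camera_height, surface_height):
    """Choose the look based on which side of the surface the camera is on."""
    return UNDERWATER if camera_height < surface_height else ABOVE_WATER
```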

Story

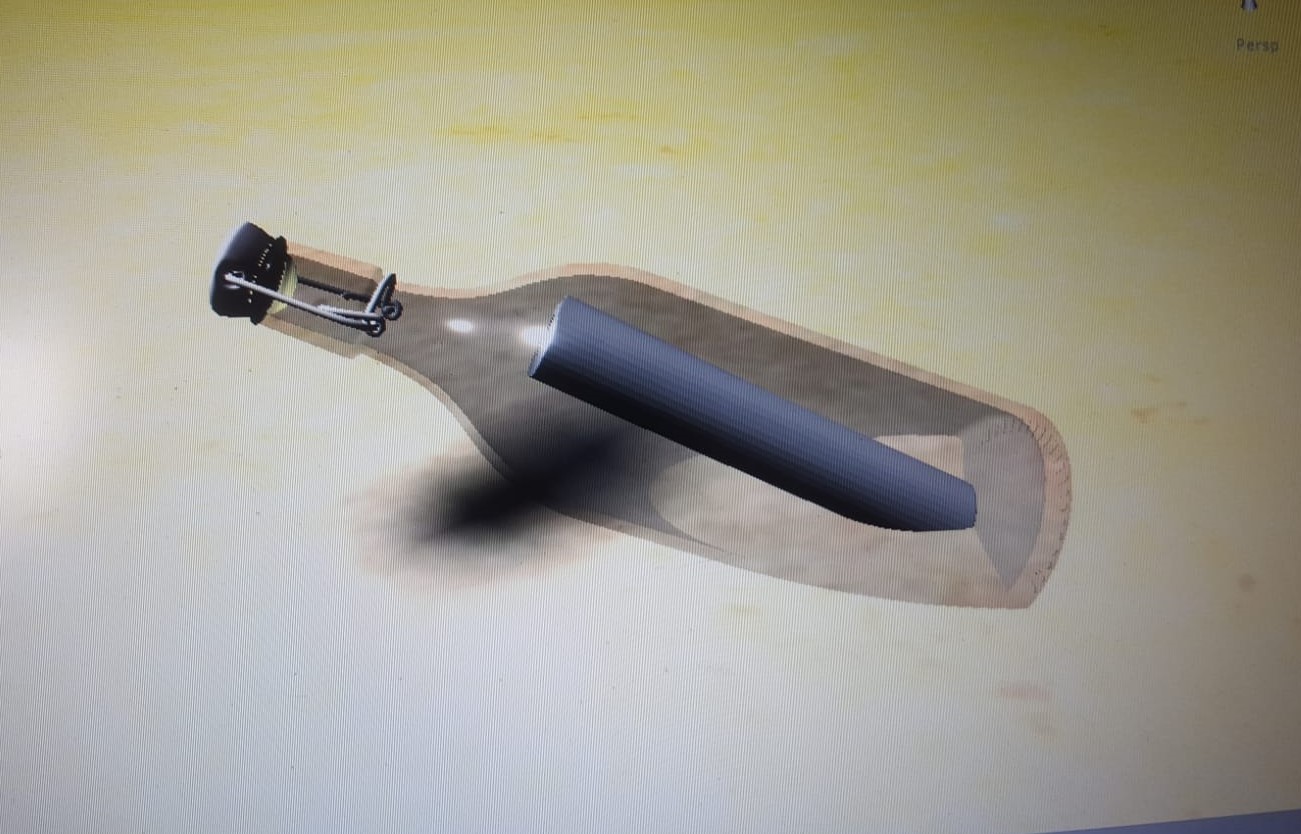

There is a story to our experience. The player actually starts out on land, in a small, remote cabin surrounded by rocky mountains. From there, the player can move forwards into the water. Scattered throughout the environment are messages in bottles. The idea is that these messages together tell a story. We also thought about letting people leave these messages themselves, but multiplayer functionality wasn't really a must for the project. I was the only group member experienced with Unity, so I did most of the development of this application myself. Another group member also did some parts of the terrain. The rest focused on the research, bodystorming, mood boards, report, story, and presentation. For this reason, my knowledge about the story element in our simulation ends here.

Movement

Diegetic movement in Virtual Reality is always a challenge, especially with a headset like the Google Cardboard, which offers only 3 degrees of freedom and has no controllers. The movement should feel natural and give the player a feeling of control to reduce the chance of motion sickness (see Keep Your Eyes On The Road Kid for more about VR-induced motion sickness), but at the same time the movement should follow some general rules in terms of boundaries, speed and efficiency. Our solution to this was to move via gaze control: looking at a certain point for a certain period of time will cause you to make a stroke in that direction. The movement happens at regular intervals, just like swimming strokes. The strokes can be interrupted by changing the angle at which you're looking.

The boundaries are implemented quite well too. The boundaries on the sides of the terrain are naturally far away. The terrain typically goes up here to form a sort of wall of rocks. This guides the player away from the edges and ensures that we don't need to put any terrain behind it, since the player could never see that anyway. The bottom boundary is the terrain height at that position plus a certain margin. The player can never swim through terrain due to this restriction, but it also makes it very easy to swim closely over the bottom to get a good view of the coral and sea life there. The top boundary is the water surface. You can actually breach this surface, after which you'll be floating on top of the waves. You can swim above water as well, as you're, sort of, stuck to the surface. You have to look downwards at a certain minimum angle to dive underwater again.

The gaze controller also allows you to interact with the world around you. For example, you can read the messages in the bottles by staring at them for a few seconds.
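To make the gaze control and the vertical boundaries more concrete, here is a small Python sketch. The class `GazeSwimmer`, its `dwell_time` and `angle_tolerance` parameters, and the helper `clamp_height` are hypothetical names with made-up default values; the real application does this inside Unity.

```python
import math


def angle_between(a, b):
    """Angle in radians between two non-zero 3D direction vectors."""
    dot = sum(p * q for p, q in zip(a, b))
    norm = math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b))
    return math.acos(max(-1.0, min(1.0, dot / norm)))


class GazeSwimmer:
    def __init__(self, dwell_time=1.0, angle_tolerance=0.2):
        self.dwell_time = dwell_time            # seconds of steady gaze per stroke
        self.angle_tolerance = angle_tolerance  # radians the gaze may drift
        self._anchor = None                     # direction the dwell started in
        self._held = 0.0                        # seconds spent near the anchor

    def update(self, gaze, dt):
        """Return True when a swimming stroke should start in the gaze direction."""
        if (self._anchor is None
                or angle_between(self._anchor, gaze) > self.angle_tolerance):
            # The player looked somewhere else: restart the dwell timer.
            self._anchor = gaze
            self._held = 0.0
            return False
        self._held += dt
        if self._held >= self.dwell_time:
            # Reset so that a steady gaze produces strokes at regular intervals.
            self._held = 0.0
            return True
        return False


def clamp_height(y, terrain_height, margin, surface_height):
    """Keep the player between the sea floor (plus a margin) and the surface."""
    return max(terrain_height + margin, min(y, surface_height))
```

Holding the gaze steady yields one stroke every `dwell_time` seconds, and looking away by more than `angle_tolerance` cancels the pending stroke, which roughly matches the interruptible strokes described above.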