Boerenkerkhof AR

My first major paid project ever was a peculiar one, to say the least. From October 2019 until early 2021 I was busy creating an Augmented Reality app that would transform a graveyard into a museum of sorts. The general idea was that you could use your smartphone to scan the tombstones, and it would tell you a story about the person buried there, supported by 3D animations on and around the grave. Although it may seem morbid at first glance, the app is actually surprisingly lighthearted and educational. There is a huge variety of people with different stories to tell (e.g. a banker, a preacher, a football player, a midwife, and many more). Together, these stories tell the story of Enschede as a whole. Since the graveyard was used from approx. 1850 to 1950, this story is about the so-called Golden Age of Enschede, in which this region experienced rapid economic growth due to its large textile industry. The app's most interesting feature is of course the Augmented Reality, or AR for short. AR is a rather well-known technology, but applications that are available for the general consumer are still scarce. And applying AR in such a context as a graveyard, or even outdoors for that matter, is unprecedented.

Augmented Reality

Let's start with discussing the main feature of the app: the AR. This section will explain how the AR works and what changes we applied to this base functionality to increase the performance in such an uncontrollable environment as an outdoor graveyard. It goes into the technical details, so it may get a little complex at times. The AR in this app can be split into two distinct parts with different functions. One part is recognizing and tracking the graves, and the second part is actually combining the virtual and physical objects. This distinction may seem small, as you might think one automatically leads to the other, but it will prove important when we get into things like compatibility with older devices. The recognizing and tracking part will be the most prominent in this section, as it is by far the more complex of the two. It is also worth mentioning that our app provides different so-called "Viewmodes", each of which uses different functionalities of the AR and gives a different experience. There are three distinct Viewmodes: "2D", "3D", and "Full 3D".

From Scratch...

In order to design such an app as Boerenkerkhof AR, you need to think about what information you need the AR to collect from the environment. In our case we want to know whose grave we're looking at, so that we know which audio files, subtitles, and animations to load, and we want to know the approximate size, position and rotation of this grave, so that we can place the animations on the grave with the correct position, rotation and size as well. We spent about two months looking at different methods of collecting this information. It was very important that the app be as advanced and fancy as possible, so we wanted to avoid low-tech solutions like QR codes. We looked into nearly anything you can think of, and I'll quickly go over the conclusions we came to for each method.

Potential Methods

Text Recognition (OCR)

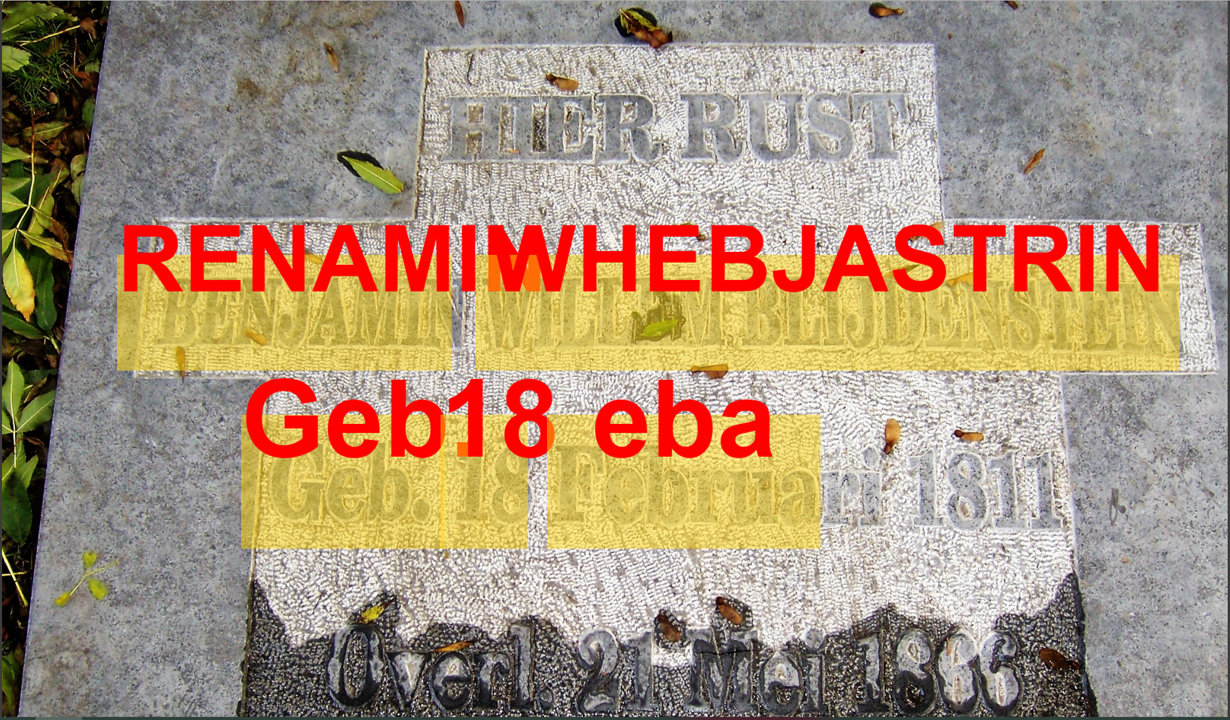

Text recognition would allow us to see whose grave we're looking at, because we can cross-reference the recognized text with a hardcoded selection of text we know is on the graves. However, the graves have a huge variety of fonts, colors, etc., making it hard to actually recognize a significant portion of the text, especially because text-recognition software is mostly optimized for recognizing simple black-on-white text like a receipt. Another point to make is that the text on the graves is very similar from one grave to the next. Recognizing the number 19 doesn't help much when most of the people died somewhere in the 1900s. Lastly, text recognition does not give us a transform (position, rotation, and scale) to place the animations, so we would need to find a different method for that.
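To illustrate the cross-referencing idea, here is a minimal sketch in Python (not the Unity code of the app, and not something we shipped). The grave ids, the inscriptions in KNOWN_INSCRIPTIONS and the similarity threshold are all made-up placeholders. It also shows the weakness described above: a short fragment like "19" does not match anything with confidence.

```python
from difflib import SequenceMatcher

# Hypothetical inscriptions; a real app would store the text known to be on each grave.
KNOWN_INSCRIPTIONS = {
    "grave_a": "hier rust jan jansen geboren 1850 overleden 1912",
    "grave_b": "hier rust piet pietersen geboren 1861 overleden 1923",
}


def best_match(recognized_text, threshold=0.6):
    """Return the grave id whose inscription is most similar to the OCR output,
    or None when no inscription is similar enough (threshold is an assumption)."""
    # Normalize case and whitespace before comparing.
    cleaned = " ".join(recognized_text.lower().split())
    best_id, best_score = None, 0.0
    for grave_id, inscription in KNOWN_INSCRIPTIONS.items():
        score = SequenceMatcher(None, cleaned, inscription).ratio()
        if score > best_score:
            best_id, best_score = grave_id, score
    return best_id if best_score >= threshold else None
```

A fuzzy comparison like this tolerates a few misread characters, but it still says nothing about where the grave is or how large it is.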

Flat Surface Recognition

There is software available that is able to recognize flat surfaces, like a tombstone. This one would need to be combined with another technology (like text recognition) because it can't recognize whose grave it is, but it is able to find a transform of the grave, to some extent. However, the results we found were very inconsistent. It has trouble recognizing some graves due to the engraved text, and it may also recognize the ground or another grave. Translating a flat surface to a scale also proved difficult, since it may recognize only a part of the surface and conclude that the tombstone is smaller than it actually is. Lastly, flat surface recognition relies on things like lighting and shadows to determine a flat surface, and it needs some time to recognize one, making it rather slow. The video above displays the functionality of a prototype we made. The second half of the video combines flat surface recognition with 2D image recognition to track and scale a cube in 3D space.

Note: We actually made a functional prototype where we tracked a small Lego firetruck, but I cannot seem to find any pictures of that. So this is a screenshot from another project I worked on at the time where we attempted to 3D scan a rubber duck.

3D Object Recognition & Silhouette Recognition

3D object recognition seems ideal at first sight. It recognizes the shape of a tombstone and by doing so can determine both whose grave it is and a transform for the animations. However, in its current state, 3D object recognition only works on small objects (< 25cm3), and it requires scanning the object from every angle beforehand (ideally with a white background), which would prove difficult in a graveyard. And even if it were available for larger objects, most of the tombstones in this app are simple, rectangular, flat stones, so they aren't very 3D or distinguishable at all.

GPS

Another method of determining whose grave a user may be looking at would be to look at the position of the user, because we know where each grave is located and the graves don't move, of course. Being able to accurately track the user's position has more benefits than that, though. It would, for example, also allow us to guide the user to a new grave on the map after they are finished with one grave. It proved nearly impossible to accurately track the user, however. Google Maps and other navigational tools can reduce inaccuracies by assuming you're on a road and sticking you to said road until a significant difference is measured, but we don't have that luxury in a graveyard. The accuracy was so bad that even determining which half of the graveyard you're in is not very reliable. Combine that with the fact that most of the graves from our app are clustered together, and you'll come to the inevitable conclusion that GPS is not a very helpful feature for our app.
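The clustering problem can be made concrete with a small Python sketch (illustrative only, not code from the app). The coordinates in HYPOTHETICAL_GRAVES are invented, and the accuracy radius stands in for whatever uncertainty the phone reports. Every grave within that radius is an equally valid candidate, so a cluster of graves cannot be told apart.

```python
import math

# Invented coordinates: two graves a few metres apart and one further away.
HYPOTHETICAL_GRAVES = {
    "a": (52.22000, 6.89000),
    "b": (52.22002, 6.89003),
    "c": (52.22050, 6.89000),
}


def distance_m(lat1, lon1, lat2, lon2):
    """Approximate ground distance in metres (equirectangular approximation,
    which is fine over the few hundred metres of a graveyard)."""
    earth_radius_m = 6_371_000
    mean_lat = math.radians((lat1 + lat2) / 2)
    dx = math.radians(lon2 - lon1) * math.cos(mean_lat) * earth_radius_m
    dy = math.radians(lat2 - lat1) * earth_radius_m
    return math.hypot(dx, dy)


def candidate_graves(user_fix, accuracy_m, graves):
    """Return every grave the user could be standing at, given a GPS fix
    and its accuracy radius in metres."""
    lat, lon = user_fix
    return sorted(
        grave_id
        for grave_id, (g_lat, g_lon) in graves.items()
        if distance_m(lat, lon, g_lat, g_lon) <= accuracy_m
    )
```

With an accuracy radius of 10 metres, a fix between graves "a" and "b" returns both of them, which is exactly the ambiguity we ran into.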

2D Recognition / Image Recognition

So that brings us to 2D recognition, which is the technique we eventually went with. It is similar to 3D Object Recognition in functionality, but instead of a 3D shape, it recognizes a 2D texture. And although it is certainly not a perfect fit for the task at hand, we considered it to be the lesser evil, so to say. More about 2D recognition in the next section.

Image Recognition

The Boerenkerkhof AR app uses 2D image recognition to recognize a grave and find a transform for the animations. There are a number of AR image recognition plugins and libraries available in Unity (Vuforia, ARCore, ARKit, ARFoundation, etc.), but they all work in a similar fashion. For our app, the plugin of choice was ARFoundation, for the simple reason that this was the only one that would work reliably on both Android and iOS devices. 2D image recognition works as follows: you create what is called a ReferenceImageLibrary, which is a collection of images that you're looking to recognize. The camera feed from your device is then compared with each of these images. When an image is found, you know whose grave it is, because you know which image from your ReferenceImageLibrary was recognized, and you also know its transform, because you specified the physical size of the reference images in the ReferenceImageLibrary as well. The way these images are compared to your camera feed is also important: they are not compared as images, but as point cloud maps (PCM for short). Basically, a point is placed on every place of interest in the image, such as sharp angles, high contrast, and edges. This makes comparing the images to the camera feed far more efficient.
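The bookkeeping side of this can be sketched in a few lines of Python. This is a simplified illustration, not ARFoundation's API: the ReferenceImage and Placement types, the image name "tattersall_01", the 0.8 m width and the idea that animations are authored for a 1 m wide stone are all assumptions made for the example. The actual detection (matching the camera feed against the point cloud maps) is done by the AR plugin and is not shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceImage:
    name: str        # name of the image in the library
    grave_id: str    # whose grave this image belongs to
    width_m: float   # physical width of the photographed area, in metres


@dataclass(frozen=True)
class Placement:
    grave_id: str
    position: tuple  # where the image was detected, in metres
    scale: float     # factor that makes the animation fit this stone


# Assumption for this sketch: animations are modelled for a 1 m wide stone.
ANIMATION_AUTHORED_WIDTH_M = 1.0


def place_animation(library, detected_name, detected_position):
    """Turn 'image X was detected at position P' into 'show grave G's
    animation at P with the right scale'."""
    image = library[detected_name]
    scale = image.width_m / ANIMATION_AUTHORED_WIDTH_M
    return Placement(image.grave_id, detected_position, scale)


library = {"tattersall_01": ReferenceImage("tattersall_01", "tattersall", 0.8)}
placement = place_animation(library, "tattersall_01", (0.0, 0.0, 1.5))
```

The point is that a single detection answers both questions from the start of this section: the image name tells you whose grave it is, and the stored physical size gives you the scale.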

Challenges

Of course, 2D image recognition isn't perfect, and we did encounter some problems and challenges, which are discussed below.

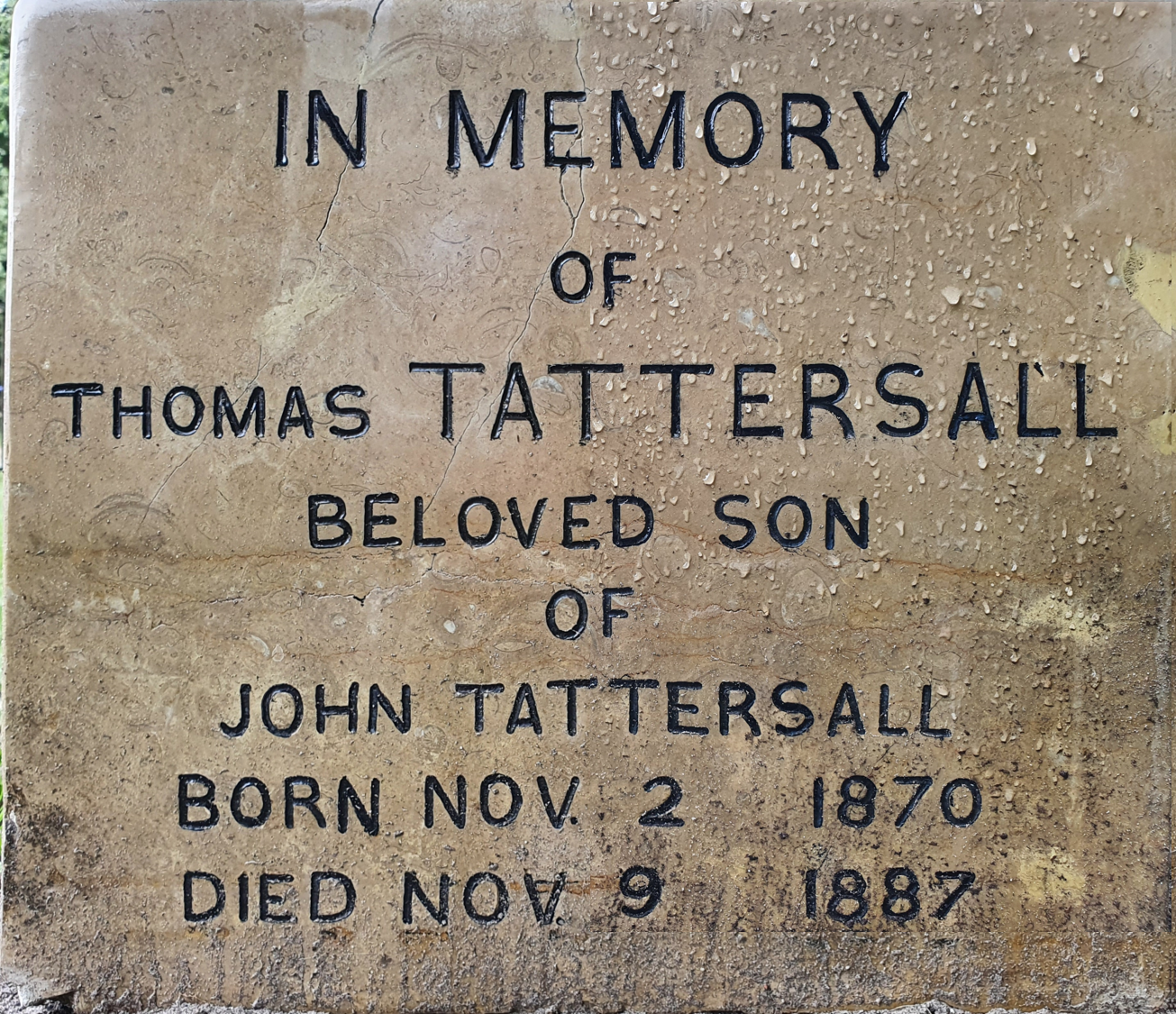

The image above consists of two photos I took of the grave of Tommy Tattersall, digitally combined. One of the photos was taken shortly after it had rained. Although for us it is clear that this is still the exact same grave, a computer does not have a concept of what a grave is; it just sees pixels. It is very difficult for it to distinguish the relevant information (stone color, text, etc.) from the irrelevant (water droplets, dirt).

Visual Obstructions

So, as said before, the 2D image recognition compares the camera feed with a series of reference images (or rather the PCM of both). But in order to have a match, the camera feed must resemble the reference image. Things like dirt, branches, or shadows on the grave hinder this, since the app cannot distinguish between a point on the PCM created by a misplaced branch or shadow on the grave and a point that is supposed to be there. This problem is amplified by the fact that the majority of tombstones in our app are flat, lying stones, where dirt does not automatically fall off. Depending on the type of stone used, some graves also become reflective when they're wet, which of course also causes problems. This, above all other reasons, is why it is difficult to make an AR application for such an uncontrollable environment as the outdoors. In order to tackle this, and also the problems mentioned below, each grave has more than one reference image. Some graves may have up to five reference images. Each of these images is taken at a different time of day and is cropped differently. This increases the probability that, if one reference image is not recognized because of, let's say, a branch on the grave, another reference image that has the part with the branch cropped off can still be recognized. What also helps is that when tracking is lost (so in a frame where the grave texture is not recognized), the app falls back on the accelerometers and gyroscope of your phone to keep the animation objects in approximately the same position in 3D space.
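Both ideas, several reference images per grave and holding the last pose when tracking is lost, can be sketched as follows. This is simplified Python for illustration, not the app's Unity code. The GraveTracker class and the image names are hypothetical, and the sensor-based fallback is reduced to "keep the last known pose", whereas on a real device the AR framework keeps updating the camera's own position from the accelerometers and gyroscope.

```python
class GraveTracker:
    """Maps any of a grave's reference images to that grave and remembers
    the last pose, so the animation stays put when recognition drops out."""

    def __init__(self, image_to_grave):
        self.image_to_grave = image_to_grave  # reference image name -> grave id
        self.grave_id = None
        self.pose = None
        self.tracking = False

    def update(self, detected_image, detected_pose):
        """Call once per frame; detected_image is None when nothing was recognized."""
        if detected_image in self.image_to_grave:
            self.grave_id = self.image_to_grave[detected_image]
            self.pose = detected_pose
            self.tracking = True
        else:
            # Tracking lost: keep the last grave and pose instead of hiding the animation.
            self.tracking = False
        return self.grave_id, self.pose


# Hypothetical library: two differently cropped photos of the same grave.
tracker = GraveTracker({
    "tattersall_morning_full": "tattersall",
    "tattersall_noon_top_half": "tattersall",
})
```

If a branch covers the bottom of the stone, the full photo may fail while the top-half crop still matches, and both lead to the same grave.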

Engraved Text

Since we use 2D image recognition, the app is looking for a certain 2D image. The problem with this is that engraved letters are of course 3D, and looking at them from a different angle changes the image as a whole (see the GIF for a visual, albeit exaggerated, example). The reference images are taken perpendicular to the graves (at a 90-degree angle), to ensure the size can be determined correctly and is not distorted by perspective. But most people won't be looking at the graves from this angle, which can cause problems when trying to recognize the grave. It is also worth noting that in some cases, because the letters are engraved, they are not highlighted in a different color. This means that the overall contrast of the grave is lower and the PCMs of the reference images themselves have fewer points to work with, also making the grave harder to recognize. More information about what makes a reference image good or bad can be found here.

Ambient Light

As stated previously, shadows on the graves can be a problem for the AR recognition. But dim lighting can also be a problem, as it becomes harder to see contrast in a darker environment. This problem was ignored for the most part, arguing that people would only use this app during the day and with somewhat nice weather (since it requires you to be outside). But as a small help, we allowed the user to keep control of their flashlight, which can be used to illuminate the graves.

Grave Orientation

I complained about the majority of the graves being flat, horizontally oriented stones. But not all of them are. Tattersall's, for example, is a standing grave, and Schukkert's grave is at an approx. 45-degree angle. The problem that arises here is that the AR functionality of this app only recognizes the reference image and will place the animations on top of it, meaning that they will be oriented incorrectly for some graves. Adding to the complexity is that the orientation of the animations is handled differently depending on which Viewmode is used. This problem was solved by hardcoding a specific angle by which the animation prefab is rotated when it is spawned in the "Full 3D" Viewmode.
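As a rough Python illustration of such a hardcoded correction (not the actual Unity code): the GRAVE_TILT_DEG table, the choice of the x axis and the sign of the rotation are assumptions made for this sketch. Only the fact that Tattersall's grave stands upright and Schukkert's is at roughly 45 degrees comes from the graveyard itself.

```python
import math

# Hypothetical tilt table in degrees: 0 = lying flat, 90 = standing upright.
GRAVE_TILT_DEG = {"tattersall": 90.0, "schukkert": 45.0}


def correct_orientation(grave_id, vector):
    """Rotate a direction vector about the x axis by the grave's hardcoded tilt.
    Graves that are not in the table are assumed to lie flat (no rotation)."""
    angle = math.radians(GRAVE_TILT_DEG.get(grave_id, 0.0))
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    x, y, z = vector
    return (x, y * cos_a - z * sin_a, y * sin_a + z * cos_a)
```

In Unity this would more likely be a single rotation applied to the spawned prefab, but the idea is the same: one fixed angle per grave, looked up by the grave that was recognized.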

Performance Enhancers

Lastly, we added some general performance-enhancing tweaks to the code. This was done simply because cross-referencing the camera feed with a series of images 60 times each second is quite a lot to ask of a simple phone with limited battery life. These tweaks are designed to be invisible to the user, such that they do not negatively impact their experience or take away from the "magic" that is AR. First and foremost, we disable the AR searching or tracking when it is not needed, for example when the user has the map open or uses the "2D" Viewmode. We also keep track of which graves have been visited, and the reference images for these graves are only compared with the camera feed every X frames, rather than every frame. This makes it harder to recognize these graves again but makes recognizing other graves more efficient. The assumption here is that people will only visit each grave once. The same thing is done based on which grave you last visited, but only for a minute or so. We assume that people choose which grave to visit based on, among other things, proximity. This means that graves that are closer to the last visited grave have a higher chance of being visited. So for a preset amount of time after visiting a certain grave, the graves that are further away only get compared to the camera feed every other frame. When a grave is recognized, the camera only looks for reference images of that specific grave, until the player is closed again. This greatly improves the tracking of the currently relevant grave, and prevents the wrong grave from popping up mid-animation.
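The scheduling rules above could look roughly like this. It is a Python sketch for illustration, not the code that runs in the app. The function name images_to_compare, its parameters and the value 10 for "every X frames" are assumptions, and the timer that decides how long graves count as "far away" is left to the caller.

```python
def images_to_compare(frame, graves, visited, far_away,
                      locked_grave=None, visited_interval=10):
    """Pick the reference images to compare with the camera feed this frame.

    graves:       dict of grave id -> list of reference image names
    visited:      grave ids the user has already visited
    far_away:     grave ids currently treated as far from the last visited grave
    locked_grave: grave whose animation player is open, if any
    """
    if locked_grave is not None:
        # While the player is open, only look for the grave being shown.
        return list(graves[locked_grave])

    selected = []
    for grave_id, images in graves.items():
        if grave_id in visited:
            interval = visited_interval  # every X frames
        elif grave_id in far_away:
            interval = 2                 # every other frame
        else:
            interval = 1                 # every frame
        if frame % interval == 0:
            selected.extend(images)
    return selected


# Hypothetical set-up: "b" has been visited, "c" is far from the last visited grave.
graves = {"a": ["a1", "a2"], "b": ["b1"], "c": ["c1"]}
```

On most frames only the images of nearby, unvisited graves are compared, which is where the savings come from.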

AR Viewmodes

As mentioned before, the app has 3 different Viewmodes: "2D", "3D", and "Full 3D". And they each behave slightly differently. The original reason for this was compatibility: We want our app to work on most devices, but older devices may not support ARFoundation. This is where the distinction between the two parts of Augmented Reality becomes really important. Because you don't need ARFoundation to achieve AR, and "2D" mode can achieve the AR experience without it. The three different Viewmodes and their differences are described below, ordered by complexity.

Full 3D

"Full 3D" mode is the most complex of the three. This mode is the most similar to the AR experience you might envision based on the information above. It relies on ARFoundation and uses it to recognize the graves. The animations are then placed on these graves, and you can walk around them and look at them from different angles. It is truly as if the virtual objects are in physical 3D space. In theory, this mode provides the best experience, but it is also the most susceptible to the problems mentioned above. This is because, when using this Viewmode, the app will attempt to recognize the grave every frame, which is nearly impossible. It is also the least energy-efficient because of this. The demo video provided shows an example of what using the app with this Viewmode looks like. What's interesting to note is that at some points (especially noticeable during the part with the horses around timestamp 2:20) the app loses tracking of the image. The app will then fall back on the accelerometers and the gyroscope of the device to keep the animation in approximately the same position in 3D space as it was when the image was last recognized.

3D

"3D" mode is a simplified version of "Full 3D" mode, made to give a similar AR experience without many of the problems with tracking or recognizing the grave. "3D" mode still recognizes the grave using ARFoundation, but once it has, it stops searching and tracking. Instead, it places the animations on a fixed anchor point relative to the camera. This means that if you move your phone around, the animation will remain in the exact same place on screen, and you can only look at it from that perspective. This perspective, however, is designed such that, if you stand right in front of the grave, hold your phone at a normal angle, and don't walk around, you get the exact same experience as you would have with the "Full 3D" Viewmode. This is of course a bit harder for this specific grave, since it has a fence around it, preventing you from standing straight in front of the grave. It should also be mentioned that the difference between Full 3D and regular 3D and 2D in terms of tracking is mitigated by some general AR principles. For example, the experiences start to differ when you do not hold your phone still. But even when using Full 3D mode, you cannot look around too far, or the tracking image will move out of the view of the camera and you'll lose tracking. The experience is also different when walking around the grave, but this is generally advised against for safety reasons, as AR may make it difficult to distinguish between physical and virtual objects.

2D

The "2D" Viewmode is similar to the "3D" mode, in that it places the animations at a fixed anchor point relative to the camera. The only difference is that, instead of recognizing the graves using ARFoundation, in "2D" mode, you can simply select which grave you want to visit from a list. This means that devices that are too old to work with ARFoundation can still use our app, but only in this mode. This mode also serves as a fallback for when the other two modes aren’t working, because the graves are too dirty for example. You can switch between the modes at any time, given that your device supports ARFoundation. The fact that this mode does not attempt to recognize or track the graves in any way, means that you do not necessarily need to be on the graveyard to use it. You could, theoretically, just be at home using the app in 2D mode. However, it should be noted that the animations were designed for AR, meaning that there are, for example, scripted moments when nothing is animated but instead the user is meant to look at the grave itself.
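The differences between the three Viewmodes can be summarized in a small Python sketch (for illustration only; the names ViewMode, available_modes and so on are made up and do not come from the app's code):

```python
from enum import Enum


class ViewMode(Enum):
    TWO_D = "2D"
    THREE_D = "3D"
    FULL_THREE_D = "Full 3D"


def available_modes(supports_ar_foundation):
    """Devices without ARFoundation support can only use "2D" mode."""
    if supports_ar_foundation:
        return [ViewMode.TWO_D, ViewMode.THREE_D, ViewMode.FULL_THREE_D]
    return [ViewMode.TWO_D]


def recognizes_graves(mode):
    """ "3D" and "Full 3D" find the grave with image recognition;
    in "2D" the user picks the grave from a list."""
    return mode in (ViewMode.THREE_D, ViewMode.FULL_THREE_D)


def tracks_every_frame(mode):
    """Only "Full 3D" keeps tracking after the grave has been recognized;
    the other modes anchor the animation relative to the camera."""
    return mode is ViewMode.FULL_THREE_D
```

Seen this way, "2D" is simply the mode that needs nothing from the AR plugin, which is why it works both as the compatibility option and as the fallback.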

The Map

So, after an elaborate discussion of the AR functionality, let's have a short look at some of the simpler features this app has to offer. Starting with the map. Not every person buried on the Boerenkerkhof made it into our app: Only a select few with interesting stories made the cut. That means that not every grave you might encounter can be scanned with the app. In order to help guide the users to the correct graves, our app includes a map on which these graves are highlighted. You can zoom and scroll by pinching and swiping. You can also flip the map upside down such that the entrance you entered through is at the bottom of the screen. The visitable graves are highlighted in red and are interactive. If you click on one, it will show you a pop up which gives some information about that person that can help the user determine whether or not they want to visit that grave. Visited graves will turn green, and you can switch the visited state at will, so you can mark an unvisited grave as visited and vice versa.

Handmade Animations

A lot of people have asked me: "Can this idea be applied to other graveyards as well?" My answer to them is always the same: 'Of course, the framework is there, but you'd need new reference images and animations'. I wasn't very involved with making the stories, that was the responsibility of Ben Snijders and Georges Schafraad from the SHSEL. But I did make the majority of the animations. The animations are designed to support the story told to you, and not replace it. These animations are unique for every person and require quite a lot of work to make (about 10 hours each after practice). For this reason, this idea may prove difficult to scale and implement elsewhere. The provided video shows most of the animations currently in our app. The video was shot using an emulated computer version of the app, so instead of the blue background, envision that the animations are placed upon the graves.

In-App Tutorial

As is undoubtedly clear by now, this app is quite complex, as any cutting-edge technology app would be. I've tried to make the app as easy to use as possible, but the developer can only do so much. In order to ensure people understand how the app works, we included a tutorial that explains everything they need to know. The tutorial can be accessed at any time, since I know that most people tend to skip it the first time and only fall back on it when they encounter a problem. To prevent such problems in the first place, the app starts up with "2D" mode selected by default, since this is the easiest mode to use. It will also give you a button prompt to open the list of graves the first time you start the app in this mode. This will hopefully guide the user to a smooth experience, with or without the tutorial.

No happy ending

Unfortunately, I could not finish this project myself. Towards the end of 2020, I was struggling a lot with the publishing part. I had trouble making the app compatible with older Android versions and also had issues with publishing to iOS, as it requires you to own a ton of expensive Apple hardware, which I do not. I spent a lot of extra time on the project, and this conflicted with my own studies. So, unable to finish this project myself, I convinced my friend Koen Vogel to take over. He had helped me with the development of the AR functionality before, and already had an in-depth understanding of the app. I would've loved to see this project through to the end, but sometimes it just doesn't work out like that. I did learn a lot from this project, especially from the things that went wrong. Most of it was caused by very fundamental decisions early on, which I should have been clearer and more knowledgeable about at that stage of the project. Luckily, I am now, so incidents like this won't happen again.

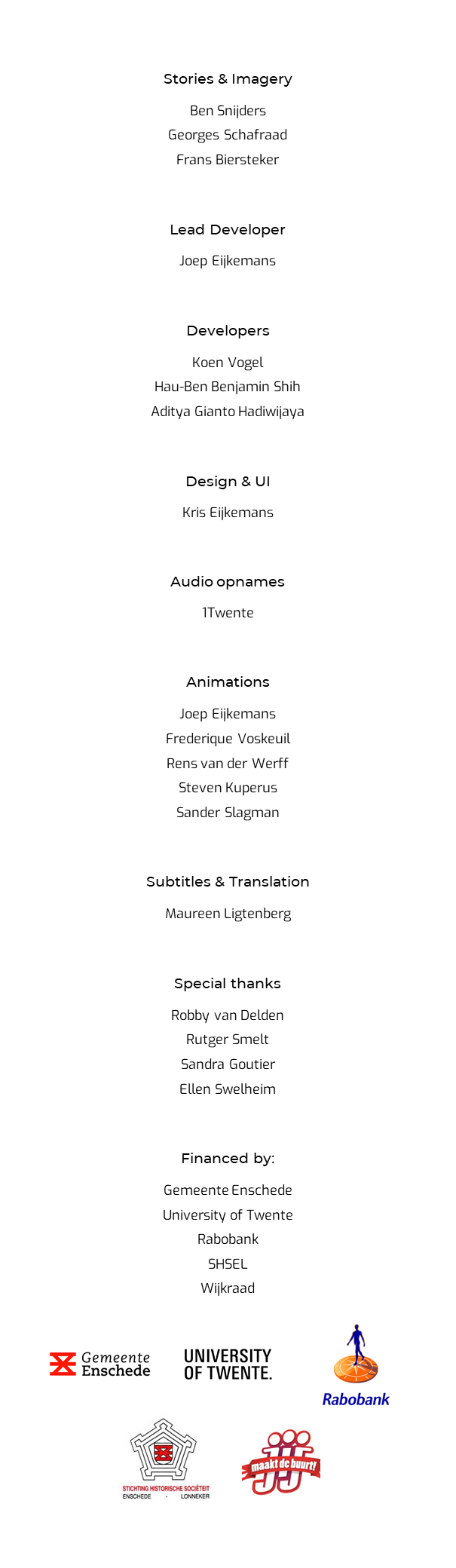

Where credit is due...

This page has gone on for far too long already, but before I end it, I would like to give credit where credit is due and thank everybody that helped make this project possible. This list of contributors and sponsors can be found in the app as well.